I’m never sure whether to post such things here, but I hope that it’s of interest to people: I’m trying to hire a top-notch Linux person for a 100% telecommute position. I’m particularly interested in people with experience managing 500 or more OS instances. It’s a shop with a lot of Debian, by the way. You can apply at that URL and mention you saw it in my blog if you’re interested.

Debian – A plea to worry about what matters, and not take ourselves too seriously

I posted this on debian-devel today. I am also posting it here, because I believe it is important to more than just Debian developers.

Good afternoon,

This message comes on the heels of Sam Hartman’s wonderful plea for compassion [1] and the sad news of Joey Hess’s resignation from Debian [2].

I no longer frequently post to this list, but when you’ve been a Debian developer for 18 years, and still care deeply about the community and the project, perhaps you have a bit of perspective to share.

Let me start with this:

Debian is not a Free Software project.

Debian is a making-the-world-better project, a caring for people project, a freedom-spreading project. Free Software is our tool.

Like many of you, hopefully all of you, I joined Debian because I enjoyed working on this project. We all did, didn’t we? We joined Debian because it was fun, because we were passionate about it, because we wanted to make the world a better place and have fun doing it.

In short, Debian is life-giving, both to its developers and its users.

As volunteers, it is healthy to step back every so often, and ask ourselves two questions: 1) Is this activity still life-giving for me? 2) Is it life-giving for others?

I have my opinions about init. Strong ones, in fact. [3] They’re not terribly relevant to this post. Because I can see that they are not really all that relevant.

14 years ago, I proposed what was, until now anyhow, one of the most controversial GRs in Debian history. It didn’t go the way I hoped. I cared about it deeply then, and still care about the principles.

I had two choices: I could be angry and let that process ruin my enjoyment of Debian. Or I could let it pass, and continue to have fun working on a project that I love. I am glad I chose the latter.

Remember, for today, one way or another, jessie will still boot.

18 years ago when I joined Debian, our major concerns were helping newbies figure out how to compile their kernels, finding manuals for monitors so we could set the X modelines properly, finding some sort of Free web browser, finding some acceptable Office-type software.

Wow. We WON, didn’t we? Not just Debian, but everyone. Freedom won.

I promise you – 18 years from now, it will not matter what init Debian chose in 2014. It will probably barely matter in 3 years. This is not key to our goals of making the world a better place. Jessie will still boot. I say that even though my system runs out of memory every few days because systemd-logind has a mysterious bug [4]. It will be fixed. I say that even though I don’t know what init system it will use, or how much choice there will be. I say that because it is simply true. We are Debian. We will make it work, one way or another.

I don’t post much on this list anymore because my personal passion isn’t with posting on this list anymore. I make liberal use of my Delete Thread keybinding on -vote these days, because although I care about the GR, I don’t care about it enough to read all the messages about it. I have not yet decided if I will spend the time researching it in order to vote. Instead of debating the init GR, sometimes I sit on the sofa with my wife. Sometimes I go out and fly the remote-control airplane I’m learning to fly. Sometimes I repair my plane after a flight that was shorter than planned. Sometimes I play games with my boys, or help them with homework, or share my 8-year-old’s delight as a text file full of facts about the Titanic that he wrote in Emacs comes spitting out of the printer. Sometimes I write code or play with the latest Linux filesystems or build a new server for my basement.

All these things matter more to me than init. I have been using Debian at home for almost 20 years, at various workplaces for almost that long, and it is not going to stop being a part of my life any time soon. Perhaps I will have to learn how to administer a new init system. Well, so be it; I enjoy learning new things. Or perhaps I will have to learn to live with some desktop limitations with an old init system. Well, so be it; it won’t bother me much anyhow. Either way, I’m still going to be using what is, to me, the best operating system in the world, made by one of the world’s foremost Freedom projects.

My hope is that all of you may also have the sense of peace I do, that you may have your strong convictions, but may put them all in perspective. That we as a project realize that the enemy isn’t the lovers of the other init, but the people that would use law and technology to repress people all over the world. We are but one shining beacon on a hill, but the world would be worse off if our beacon winked out.

My plea is that we each may get angry at what matters, and let go of the smaller frustrations in life; that we may each find something more important than init/systemd to derive enjoyment and meaning from. [5] May you each find that airplane to soar freely in the skies, to lift your soul so that the joy of using Free Software to make the world a better place may still be here, regardless of what /sbin/init is.

[1] https://lists.debian.org/debian-project/2014/11/msg00002.html

[2] https://lists.debian.org/debian-devel/2014/11/msg00174.html

[3] A hint might be that in my more grumpy moments, I realize I haven’t ever quite figured out why the heck this dbus thing is on so many of my systems, or why I have to edit XML to configure it… ;-)

[4] #765870

[5] No disrespect meant to the init/systemd maintainers. Keep enjoying what you do, too!

Being Different

This evening, after the boys were in bed, Laura and I sat down to an episode of MASH (a TV series from the 70s) and leftover homemade pumpkin bars. She commented, “Sometimes I wonder what generation we’re in. This doesn’t seem to be something people our age are usually doing.” Probably true. I suppose people my age aren’t usually learning to play the penny whistle or put up antennas in trees either.

We’ve had a fun day today – a different sort of day in a lot of ways. We took the boys for their first Wichita Symphony Orchestra experience — they were doing their first-ever “family concert” (Beethoven Lived Upstairs, which combined Beethoven’s music with a two-person play aimed at kids). And they had an “instrument petting zoo” beforehand. Both boys loved it.

From November 8, 2014

After that, we took them to a sushi place for the first time. We ordered different types of rolls for our table, encouraging them to start with the California roll. They loved it (though Oliver did complain it was a bit hard to eat). Jacob happily devoured everything he could that wasn’t spicy. He would have probably devoured the plate of California roll slices by himself if I hadn’t stopped him and encouraged him to slow down and try some other things too.

It doesn’t seem very common around here to take 5-year-olds to a sushi place and plan on them eating the same sort of food that the adults around them are. It is a lot of fun to be different. Jacob and Oliver both have their unique personalities and interests, and I hope that they continue to find strength and joy in all the ways they are unique.

Halloween: A Pumpkin and an Insect Matador

You never quite know what to expect with children. For Halloween this year, Laura found some great costumes at a local thrift store. Jacob loved his “matador” costume, with a cape and vest. He had fun swishing the cape around him. But he didn’t want to use the nice hat with a red flower in it that Laura found. Nope. What he wanted was the hat with plastic springy things that she got on a lark – he said it was “insect antennae” and that he was an “insect matador”. This prompted some confused looks and big smiles from the people he saw when we went trick-or-treating!

Oliver, meanwhile, enjoyed his pumpkin outfit, complete with orange hair – his favorite part.

Here’s a typical scene:

And, of course, Jacob running with the cape flowing behind him:

Update on the systemd issue

The other day, I wrote about my poor first impressions of systemd in jessie. Here’s an update.

I’d like to start with the things that are good. I found the systemd community to be one of the most helpful in Debian, and the #debian-systemd IRC channel to be especially helpful. I was in there for quite some time yesterday, and appreciated the help from many people, especially Michael. This is a nontechnical factor, but is extremely important; this has significantly allayed my concerns about systemd right there.

There are things about the systemd design that impress. The dependency and configuration systems are a lot more flexible than those of sysvinit. They are also a lot more complicated, and it is difficult to figure out what’s happening. I am unconvinced of the utility of parallelization of boot to begin with; I rarely reboot any of my Linux systems, desktops or servers, and it seems to introduce needless complexity.

Anyhow, on to the filesystem problem, and a bit of background. My laptop runs ZFS, which is somewhat similar to btrfs in that it’s a volume manager (like LVM), RAID manager (like md), and filesystem in one. My system runs LVM, and inside LVM, I have two ZFS “pools” (volume groups): one, called rpool, that is unencrypted and holds mainly the operating system; and the other, called crypt, that is stacked atop LUKS. ZFS on Linux doesn’t yet have built-in crypto, which is why LVM is even in the picture here (to separate out the SSD at a level above ZFS to permit parts of it to be encrypted). This is a bit of an antiquated setup for me; as more systems have AES-NI, I’m moving toward encrypting everything except /boot.

Anyhow, inside rpool are the / filesystem, /var, and /usr. Inside crypt are /tmp and /home.

Initially, I tried to just boot it, knowing that systemd is supposed to work with LSB init scripts, and ZFS has init scripts with carefully-planned dependencies. This was evidently not working, perhaps because /lib/systemd/systemd/ It turns out that systemd has a few assumptions that turn out to be less true with ZFS than otherwise. ZFS filesystems are normally not mounted via /etc/fstab; a ZFS pool has internal properties about which dataset gets mounted where (similar to LVM’s actions after a vgscan and vgchange -ay). Even though there are ordering constraints in the units, systemd is writing files to /var before /var gets mounted, resulting in the mount failing (unlike ext4, ZFS by default will reject an attempt to mount over a non-empty directory). Partly this is due to the debian-fixup.service, and partly it is due to systemd reacting to udev items like backlight.

This problem was eventually worked around by doing zfs set mountpoint=legacy rpool/var, and then adding a line to fstab (“rpool/var /var zfs defaults 0 2”) for /var and its descendent filesystems.
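
For reference, that workaround amounts to something like the following. This is only a sketch of the two steps described above, using the dataset name and fstab entry from this post; it needs root, and other pools or descendant datasets would need their own settings and entries.

```shell
# Switch the dataset to legacy mounting so ZFS no longer mounts it automatically.
zfs set mountpoint=legacy rpool/var

# Then add this line to /etc/fstab so the normal fstab handling mounts it
# (shown as a comment because it is a file entry, not a command):
#   rpool/var  /var  zfs  defaults  0  2
```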

This left the problem of /tmp; again, it wasn’t getting mounted soon enough. In this case, it required crypttab to be processed first, and there seem to be a lot of bugs in the crypttab processing in systemd (more on that below). I eventually worked around that by adding After=cryptsetup.target to the zfs-import-cache.service file. For /tmp, it did NOT work to put it in /etc/fstab, because then it tried to mount it before starting cryptsetup for some reason. It probably didn’t help that the system’s cryptdisks.service is a symlink to /dev/null, a fact I didn’t realize until after a lot of needless reboots.
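
The After= change could look roughly like this. Note the assumption: I added the line to the service file itself, whereas this sketch uses a systemd drop-in file (the directory and the override.conf name are illustrative), which should have the same ordering effect without modifying the packaged unit.

```ini
# /etc/systemd/system/zfs-import-cache.service.d/override.conf
# (hypothetical drop-in; the path and file name are assumptions, not from my system)
[Unit]
# Order the ZFS pool import after the encrypted devices have been set up.
After=cryptsetup.target
```

After creating or changing such a file, systemctl daemon-reload makes systemd pick it up.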

Anyhow, one thing I stumbled across was poor console control with systemd. On numerous occasions, I had things like two cryptsetup processes trying to read a password, plus an emergency mode console trying to do so. I had this memorable line of text at one point:

(or type Control-D to continue): Please enter passphrase for disk athena-crypttank (crypt)! [ OK ] Stopped Emergency Shell.

And here we venture into unsatisfying territory with systemd. One answer to this in IRC was to install plymouth, which apparently serializes console I/O. However, plymouth is “an attractive boot animation in place of the text messages that normally get shown.” I don’t want an “attractive boot animation”. Nevertheless, neither systemd-sysv nor cryptsetup depends on plymouth, so by default, the prompt for a password at boot is obscured by various other text.

Worse, plymouth doesn’t support serial consoles, so at the moment booting a system that uses LUKS with systemd over a serial console is a matter of blind luck of typing the right password at the right time.

In the end, though, the system booted and after a few more tweaks, the backlight buttons do their thing again. Whew!

Update 2014-10-13: uau pointed out that Plymouth is more than a bootsplash, and can work with serial consoles, despite the description of the package. I stand corrected on that. (It is still the case, however, that packages don’t depend on it where they should, and the default experience for people using cryptsetup is not very good.)

First impressions of systemd, and they’re not good

Well, I finally bit the bullet. My laptop, which runs jessie, got dist-upgraded for the first time in a few months. My brightness keys stopped working, and it would no longer suspend to RAM when the lid was closed. Upon chasing things down from XFCE to policykit, it eventually became apparent that major parts of the desktop suddenly break without systemd in jessie. Sigh.

So apt-get install systemd-sysv (and watch sysvinit-core get uninstalled) and reboot.

Only, my system doesn’t come back up. In fact, over several hours of trying to make it boot with systemd, it failed in numerous spectacular and hilarious (or, would be hilarious if my laptop would boot) ways. I had text obliterating the cryptsetup password prompt almost every time. Sometimes there were two processes trying to read a cryptsetup password at once. Sometimes a process was trying to read that while another one was trying to read an emergency shell password. Many times it tried to write to /var and /tmp before they were mounted, meaning they *wouldn’t* mount because there was stuff there.

I noticed it not doing much with ZFS, complaining of a dependency loop between zfs-mount and $local-fs. I fixed that, but it still wouldn’t boot. In fact, it simply hung after writing something about wall passwords.

I’ve dug into systemd, finding a “unit generator for fstab” (whatever the heck that is; it’s not at all made clear by systemd-fstab-generator(8)).

In some cases, there’s info in journalctl, but if I can’t even get to an emergency mode prompt, the practice of hiding all stdout and stderr output is not all that pleasant.

I remember thinking “what’s all the flaming about?” systemd wasn’t my first choice (I always thought “if it ain’t broke, don’t fix it” about sysvinit), but I basically ignored the thousands of messages, thinking that whatever happens, jessie will still boot.

Now I’m not so sure. Even if the thing boots out of the box, it seems like the boot process with systemd is colossally fragile.

For now, at least zfs rollback can undo upgrades to 800 packages in about 2 seconds. But I can’t stay at some early jessie checkpoint forever.

Have we made a vast mistake that can’t be undone? (If things like even *brightness keys* now require systemd…)

The Thrill and Stress of Too Many Hobbies

Today, 4PM. Jacob and Oliver excitedly peer at the box in our kitchen – a really big box, taller than them. Inside is the first model airplane I’d ever purchased. The three of us hunkered down on the kitchen floor, opened the box, unpacked the parts, examined the controller, and found the manual with cryptic assembly directions. Oliver turned some screws while Jacob checked out the levers on the controllers. Then they both left for a bit to play with their toy buses.

A little while later, the three of us went outside. It was too windy to fly. I had never flown an RC plane before, only RC quadcopters (much easier to fly), plus some practice time on an RC simulator. But the excitement was too much. So out we went, and the plane took off perfectly, climbed, flew over the trees, and circled above our heads at my command. I even managed a good landing in the wind, despite about 5 aborted attempts due to coming in too high, wrong angle, too fast, or last-minute gusts of wind throwing everything off. I am not sure how I pulled that all off on my first flight, but somehow I did! It was thrilling!

I’ve had a lot of hobbies in my life. Computers have run through many of them; I learned Pascal (a programming language) at about the same time I learned cursive handwriting and started with C at around age 10. It was all fun. I’ve been a Debian developer for some 18 years now, and have written a lot of code, and even books about code, over the years.

Photography, music, literature, history, philosophy, and theology have been interests for quite some time as well. In the last few years, I’ve picked up amateur radio, model aircraft, etc. And last month, Laura led me into Ada’s Technical Books during our visit to Seattle, resulting in me getting interested in Arduino. (The boys and I have already built a light-activated crossing gate for their HO-gauge model trains, and Jacob can now say he’s edited a few characters of C!)

Sometimes I find ways to merge hobbies; I’ve set up all sorts of amateur radio systems on Linux, take aerial photographs, and set up systems to stream music in my house.

But I also have a lot less time for hobbies overall than I once did; other things in life, such as my children, are more important. Some of the code I once worked on actively I no longer use or maintain, and I feel guilty about that when people send bug reports that I have no interest in fixing anymore.

Sometimes I feel a need to cut down, and perhaps have; and then, I get an interest in RC aircraft and find an airplane that is great for a beginner and fairly inexpensive.

Perhaps it is the curse of being a curious person living in an interesting world. Do any of the rest of you have a large number of hobbies? How do you feel about that?

2AM to Seattle

Monday morning, 1:45AM.

Laura and I walk into the boys’ room. We turn on the light. Nothing happens. (They’re sound sleepers.)

“Boys, it’s time to get up to go get on the train!”

Four eyes pop open. “Yay! Oh I’m so excited!”

And then, “Meow!” (They enjoy playing with their stuffed cats that Laura got them for Christmas.)

Before long, it was out the door to the train station. We even had time to stop at a donut shop along the way.

We climbed into our family bedroom (a sleeping car room on Amtrak specifically designed for families of four), and as the train started to move, the excitement of what was going on crept in. Yes, it’s 2:42AM, but these are two happy boys:

Jacob and Oliver love trains, and this was the beginning of a 3-day train trip from Newton to Seattle that would take us through Kansas, Colorado, the Rocky Mountains of New Mexico, Arizona, Los Angeles, up the California coast, through the Cascades, and on to Seattle. Whew!

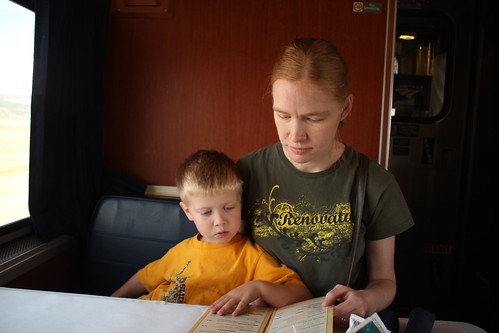

Here we are later that morning before breakfast:

Here’s our train at a station stop in La Junta, CO:

And at the beautiful small mountain town of Raton, NM:

Some of the passing scenery in New Mexico:

Through it all, we found many things to pass the time. I don’t think anybody was bored. I took the boys “exploring the train” several times — we’d walk from one end to the other and see what all was there. There was always the dining car for our meals, the lounge car for watching the passing scenery, and on the Coast Starlight, the Pacific Parlor Car.

Here we are getting ready for breakfast one morning.

Getting to select meals and order in the “train restaurant” was a big deal for the boys.

Laura brought one of her origami books, which even managed to pull the boys away from the passing scenery in the lounge car for quite some time.

Origami is serious business:

They had some fun wrapping themselves around my feet and challenging me to move, and were delighted when I could move even though they were trying to weigh me down!

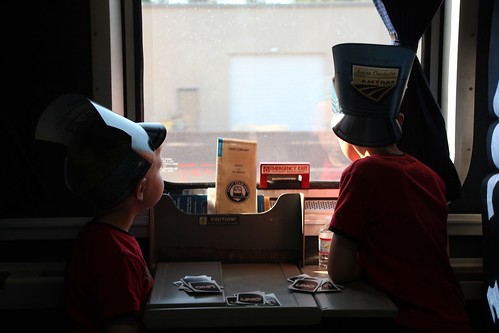

Several games of Uno were played, but even those sometimes couldn’t compete with the passing scenery:

The Coast Starlight features the Pacific Parlor Car, which was built over 50 years ago for the Santa Fe Hi-Level trains. They’ve been updated; the upper level is a lounge and small restaurant, and the lower level has been turned into a small theater. They show movies in there twice a day, but most of the time, the place is empty. A great place to go with little boys to run around and play games.

The boys and I sort of invented a new game: roadrunner and coyote, loosely based on the old Looney Tunes cartoons. Jacob and Oliver would be roadrunners, running around and yelling “MEEP MEEP!” Meanwhile, I was the coyote, who would try to catch them — even briefly succeeding sometimes — but ultimately fail in some hilarious way. It burned a lot of energy.

And, of course, the parlor car was good for scenery-watching too:

We were right along the Pacific Ocean for several hours – sometimes there would be a highway or a town between us and the beach, but usually there was nothing at all between us and the coast. It was beautiful to watch the jagged coastline go by, to gaze out onto the ocean, watching the birds — apparently so beautiful that I didn’t even think to take some photos.

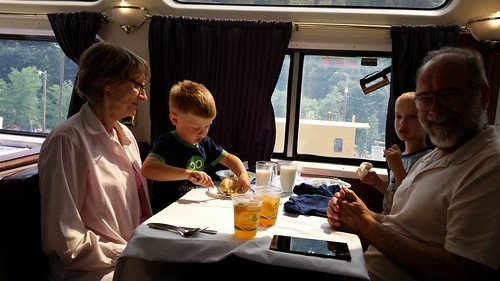

Laura’s parents live in California, and took a connecting train. I had arranged for them to have a sleeping car room near ours, so for the last day of the trip, we had a group of 6. Here are the boys with their grandparents at lunch Wednesday:

We stepped off the train in Seattle into beautiful King Street Station.

Our first day in Seattle was a quiet day of not too much. Laura’s relatives live near Lake Washington, so we went out there to play. The boys enjoyed gathering black rocks along the shore.

We went blackberry picking after that – filled up buckets for a cobbler.

The next day, we rode the Seattle Monorail. The boys have been talking about this for months — a kind of train they’ve never been on. That was the biggest thing in their minds that they were waiting for. They got to ride in the very front, by the operator.

Nice view from up there.

We walked through the Pike Market; I hadn’t been in a place that large and crowded since I was in Guadalajara:

At the Seattle Aquarium, we all had a great time checking out all the exhibits. The “please touch” one was a particular hit.

Walking underneath the salmon tank was fun too.

We spent a couple of days doing things closer to downtown. Laura’s cousin works at MOHAI, the Museum of History and Industry, so we spent a morning there. The boys particularly enjoyed the old periscope mounted to the top of the building, and the exhibit on chocolate (of course!)

They love any kind of transportation, so of course we had to get a ride on the Seattle Streetcar that comes by MOHAI.

All weekend long, we had been noticing the seaplanes taking off from Lake Washington and Lake Union (near MOHAI). So finally I decided to investigate, and one morning while Laura was doing things with her cousin, the boys and I took a short seaplane ride from one lake to another, and then rode every method of transportation we could except for ferries (we did that the next day). Here is our Kenmore Air plane:

The view of Lake Washington from 1000 feet was beautiful:

I think we got a better view than the Space Needle, and it probably cost about the same anyhow.

After splashdown, we took the streetcar to a place where we could eat lunch right by the monorail tracks. Then we rode the monorail again. Then we caught a train (it went underground a bit so it was a “subway” to them!) and rode it a few blocks.

There is even scenery underground, it seems.

We rode a bus back, and saved one last adventure for the next day: a ferry to Bainbridge Island.

Laura and I even got some time to ourselves to go have lunch at an amazing Greek restaurant to celebrate a year since we got engaged. It’s amazing to think that, by now, it’s only a few months until our wedding anniversary too!

There are many special memories of the weekend I could mention — visiting with Laura’s family, watching the boys play with her uncle’s pipe organ (it’s in his house!), watching the boys play with their grandparents, having all six of us on the train for a day, flying paper airplanes off the balcony, enjoying the cool breeze on the ferry and the beautiful mountains behind the lake. One of my favorites is waking up to high-pitched “Meow? Meow meow meow! Wake up, brother!” sorts of sounds. There was so much cat-play on the trip, and it was cute to hear. I have the feeling we won’t hear things like that much more.

So many times on the trip I heard, “Oh dad, I am so excited!” I never get tired of hearing that. And, of course, I was excited, too.

Beautiful Earth

Sometimes you see something that takes your breath away, maybe even makes your eyes moist. That happened when I saw this photo one morning:

Photography has been one of my hobbies since I was a child, and I’ve enjoyed it all these years. Recently I was inspired by the growing ease of aerial photography using model aircraft, and now can fly two short-range RC quadcopters. That photo came from the first one, and despite coming from a low-res 1280×720 camera, the image of our home in the yellow glow of sunrise brought a deep feeling of beauty and peace.

Somehow seeing our home surrounded by the beauty of the immense wheat fields and green pastures drives home how small we all are in comparison to the vastness of the earth, and how lucky we are to inhabit this beautiful planet.

As the sun starts to come up over the pasture, the only way you can tell the height of the grass at 300ft is to see the shadow it makes on the mowed pathway Laura and I use to get down to the creek.

This is a view of our church in a small town nearby — the church itself is right in the center of the photo. Off to the right, you see the grain elevators that can be seen for miles across the Kansas prairie, and of course the fields are never all that far off in the background.

Here you can see the quadcopter taking off from the driveway:

And here it is flying over my home church out in the country:

That’s the country church, at the corner of two gravel roads – with its lighted cross facing east that can be seen from a mile away at night. To the right is the church park, and the green area along the road farther back is the church cemetery.

Sometimes we get in debates about environmental regulations, politics, religion, whatever. We hear stories of missiles, guns, and destruction. It is sad, this damage we humans inflict on ourselves and our earth. Our earth — our home — is worth saving. Its stunning beauty from all its continents is evidence enough of that. To me, this photo of a small corner of flat Kansas is proof enough that the home we all share deserves to be treated well, and saved so that generations to come can also get damp eyes viewing its beauty from a new perspective.

The Heights of Coronado

Near the beautiful Swedish town of Lindsborg, Kansas, there stands a hill known as Coronado Heights. It lies in the midst of the Smoky Hills, named for the smoke-like mist that sometimes hangs in them. We Kansans smile our usual smile when we tell the story of how Francisco Vásquez de Coronado famously gave up his search for gold after reaching this point in Kansas.

Anyhow, it was just over a year ago that Laura, Jacob, Oliver, and I went to Coronado Heights at the start of summer, 2013 — our first full day together as a family.

Atop Coronado Heights sits a “castle”, an old WPA project from the 1930s:

The view from up there is pretty nice:

And, of course, Jacob and Oliver wanted to explore the grounds.

As exciting as the castle was, simple rocks and sand seemed to be just as entertaining.

After Coronado Heights, we went to a nearby lake for a picnic. After that, Jacob and Oliver wanted to play at the edge of the water. They loved to throw rocks in and observe the splash. Of course, it pretty soon descended (or, if you are a boy, “ascended”) into a game of “splash your brother.” And then to “splash Dad and Laura”.

Fun was had by all. What a wonderful day! Writing the story reminds me of a little while before that: the first time all four of us enjoyed dinner and s’mores at a fire by our creek.

Jacob and Oliver insisted on sitting — or, well, flopping — on Laura’s lap to eat. It made me smile.

(And yes, she is wearing a Debian hat.)